Real Estate SEO: Master MLS Data for AI Search Visibility

The Source Code of Real Estate SEO: How MLS Data Standardization Dictates Your AI Search Visibility

By Dean Cacioppo

Why do Zillow, Redfin, and the major portals dominate search results for your own listings? It’s not just about their ad budget. They’ve cracked the code that most brokerages overlook: the source code of real estate SEO is written in your MLS data. And in the age of AI, mastering this code is the only way to compete.

As someone who has not only built digital platforms for top brokerages but has also sat on the committees that helped shape MLS governance and IDX policy, I’ve seen the disconnect firsthand. Brokerages invest heavily in beautiful websites, expecting them to be lead-generation machines, only to find themselves invisible in search. The frustration is palpable, but the solution isn’t another blog post or a bigger keyword budget. The solution is to fundamentally rethink your website’s relationship with data. It’s time to stop renting visibility from the portals and start building a digital asset that Google—and its AI—recognizes as the ultimate authority.

Key Takeaways

- Generic IDX Feeds Are an SEO Liability: Most real estate websites use standard IDX feeds that create a “sea of sameness” online. This leads to duplicate content issues that dilute your authority and make it nearly impossible for search engines to see your site as the definitive source for a listing.

- Data Standardization is Non-Negotiable: Clean, standardized MLS data, governed by standards like those from the Real Estate Standards Organization (RESO), is the foundation of modern SEO. It transforms ambiguous text into structured entities that search engines and AI can understand, categorize, and trust.

- AI Search Prioritizes Structured Data: The rise of AI-powered search, like Google’s Search Generative Experience (SGE), since rolled out as AI Overviews, has made old SEO playbooks obsolete. AI doesn’t just rank links; it synthesizes information to provide direct answers. If your data isn’t cleanly structured, the AI is likely to pull its answer from a source whose data is, most likely Zillow or Redfin.

- Your Brokerage Needs a Proprietary Knowledge Graph: The ultimate competitive advantage is to go beyond the standard MLS feed. By enriching standardized data with advanced schema markup and proprietary content (like neighborhood videos or agent insights), you can build a unique knowledge graph that establishes your brokerage as the irrefutable local expert.

The Great Real Estate SEO Illusion: Why Your Website is Invisible

The common frustration I hear from brokers and agents is a story of unfulfilled potential. You invest in a sleek, modern website with a full IDX feed, showcasing every listing in your market. You’re told it’s the key to capturing online leads. Yet, weeks and months later, organic traffic is a trickle, and when you search for your own exclusive listing, Zillow, Redfin, and Realtor.com are staring back at you from the top of the page.

This isn’t an accident; it’s a systemic problem rooted in how most real estate websites are built.

How Generic IDX Feeds Hurt Your SEO

The vast majority of agent and brokerage websites are powered by generic IDX solutions. While convenient, these feeds create a massive “sea of sameness.” The same listing description, the same photos, and the same basic data are duplicated across hundreds, sometimes thousands, of websites in the same market.

From a search engine’s perspective, this is chaos. When Google’s crawlers encounter the exact same content on 500 different websites, they face a critical question: which one is the original, authoritative source? More often than not, they default to the sites with the highest overall domain authority—the national portals. This results in:

- Duplicate Content Filtering: Although this is not a “penalty” in the traditional sense, Google typically picks one version of duplicated content to index and rank, effectively rendering the other identical pages invisible.

- Content Dilution: Your website’s authority is spread thin. Instead of having one powerful, unique page for a listing, you have one page among a sea of clones, diminishing its value in the eyes of search algorithms.

Beyond Keywords and Blog Posts

For years, the conventional SEO wisdom was to fight this with more content. “Start a blog!” “Write about local neighborhoods!” “Target long-tail keywords!” While well-intentioned, this is an outdated strategy for a modern search landscape. You cannot out-blog a fundamental structural problem.

The real battle for visibility isn’t won at the blog post level; it’s won at the data structure level. The portals understand this. They aren’t just displaying your MLS data; they are re-structuring, standardizing, and enriching it to make it perfectly legible for machines.

Unlocking the Source Code: What is MLS Data Standardization?

To compete, you must first understand the language search engines speak. That language is structured data, and its grammar is defined by data standardization.

From Chaos to Clarity: The Role of RESO and Data Dictionaries

In simple terms, MLS data standardization is the process of creating a universal, consistent language for real estate information. It’s the work done by organizations like the Real Estate Standards Organization (RESO) to ensure that a field like “Square Footage” is always represented by one standard name (LivingArea in the RESO Data Dictionary) and not a dozen variations like “SF,” “sq. ft.,” “Square Feet,” or “sqft.”

This may seem like a minor technical detail, but its impact is monumental. Having sat on the committees that helped shape these standards, I saw firsthand how structured data was the key to future visibility. When every data point is consistent and predictable, it can be accurately interpreted by machines. It turns a chaotic mess of text into a clean, organized, and reliable database.
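To make the idea concrete, here is a minimal Python sketch of field-name normalization. The target names (LivingArea, BedroomsTotal, ListPrice) come from the RESO Data Dictionary; the alias table and the two sample feeds are invented for illustration, and a real feed would need a much larger mapping plus value and unit checks.

```python
# Hypothetical aliases a raw feed might use, mapped to RESO Data Dictionary names.
FIELD_ALIASES = {
    "sf": "LivingArea",
    "sq. ft.": "LivingArea",
    "square feet": "LivingArea",
    "sqft": "LivingArea",
    "beds": "BedroomsTotal",
    "bedrooms": "BedroomsTotal",
    "price": "ListPrice",
    "list price": "ListPrice",
}


def normalize_record(raw: dict) -> dict:
    """Return a copy of `raw` with known aliases renamed to standard field names."""
    normalized = {}
    for key, value in raw.items():
        # Unknown keys are passed through unchanged rather than dropped.
        standard = FIELD_ALIASES.get(key.strip().lower(), key)
        normalized[standard] = value
    return normalized


# Two made-up feeds describing the same home with different labels.
feed_a = {"SqFt": 2400, "Beds": 4, "Price": 500000}
feed_b = {"Square Feet": 2400, "Bedrooms": 4, "List Price": 500000}

record_a = normalize_record(feed_a)
record_b = normalize_record(feed_b)
print(record_a == record_b)
```

Once both feeds are normalized, the two records compare equal, which is the point: a machine no longer has to guess that “SqFt” and “Square Feet” mean the same thing.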

Your MLS Data Isn’t Just for Listings—It’s for Google’s Brain

Search engines no longer just read keywords; they seek to understand entities. An entity is a distinct person, place, thing, or concept. “123 Main Street,” “$500,000,” “4 Bedrooms,” and “Elmwood School District” are not just strings of text on a page; they are unique entities with properties and relationships.

Standardized data is what allows Google to identify these entities and place them within its massive database, the Knowledge Graph. When your website presents data in a clean, standardized format, you are essentially handing Google a perfectly organized library card catalog. It knows exactly what each piece of information is and how it relates to everything else. A website with non-standardized data is like handing Google a pile of books with the covers torn off. Google will simply pass over that pile in favor of a more organized source.

The AI Revolution in Search: Why Your Old SEO Playbook is Obsolete

The shift toward entity-based understanding has been accelerated by the arrival of generative AI in search. The introduction of Google’s SGE (Search Generative Experience), since rolled out as AI Overviews, represents a fundamental change in how users get information. The era of “ten blue links” is ending.

The new goal of search is not just to rank links but to provide direct, synthesized answers. When a user asks, “What are the best 4-bedroom homes with a pool in the Elmwood School District under $800,000?” the AI doesn’t just look for a webpage with those keywords. It queries its knowledge base for entities that match those specific criteria and constructs a direct answer.
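The question above is easy to answer only when each criterion maps to a typed field. The Python sketch below illustrates that idea with made-up listings; it is not a description of how Google’s systems work internally, and the Listing class, its field names, and the sample data are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class Listing:
    address: str
    bedrooms: int
    has_pool: bool
    school_district: str
    list_price: int


def match_listings(listings, *, bedrooms, pool, district, max_price):
    """Return the listings that satisfy every criterion in the question."""
    return [
        item for item in listings
        if item.bedrooms == bedrooms
        and item.has_pool == pool
        and item.school_district == district
        and item.list_price < max_price
    ]


# Hypothetical inventory; only the first entry meets all four criteria.
inventory = [
    Listing("123 Main Street", 4, True, "Elmwood", 500_000),
    Listing("45 Oak Avenue", 4, False, "Elmwood", 650_000),
    Listing("9 Lake Drive", 4, True, "Elmwood", 950_000),
    Listing("77 Pine Court", 3, True, "Elmwood", 700_000),
]

matches = match_listings(
    inventory, bedrooms=4, pool=True, district="Elmwood", max_price=800_000
)
print([item.address for item in matches])  # ['123 Main Street']
```

If the pool or the school district were buried in a free-text description instead of a field, none of these comparisons would be possible without guesswork.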

Feeding the AI: Why Structured Data is the Ultimate Fuel

AI models thrive on clean, consistent, and standardized data. It is the fuel that powers their ability to synthesize information and generate coherent answers. A website with a highly structured, standardized, and enriched data feed becomes a primary, trusted source for AI to pull from. Your data becomes the raw material for the answers Google provides.

This is a critical turning point. As I’ve discussed before, the AI revolution is reshaping digital marketing strategies, and those who adapt their technical infrastructure will gain an insurmountable advantage.

The Visibility Threat: What Happens When Your Data is Unstructured?

Here is the risk for every brokerage that fails to adapt: if your site’s data is messy, inconsistent, or unstructured, the AI will simply bypass you. It will find a source that has done the hard work of standardizing and structuring the data—like Zillow.

The result? The AI-generated answer to a query about your listing will cite the portal as its source. Your brokerage, the actual expert on that property, becomes a footnote at best, and completely invisible at worst. You lose the traffic, the lead, and your position as the local authority.

The Blueprint for Dominance: Turning Standardized Data into Search Visibility

So, how do you move from being a victim of this system to a master of it? It requires a deliberate, data-first approach to your digital infrastructure. This isn’t about a simple plugin; it’s about re-architecting how your website processes and presents information.

Step 1: Building Your Digital Twin with Advanced Schema Markup

Schema markup is the vocabulary you use to tell search engines exactly what your data means. While many IDX platforms include basic RealEstateListing schema, this is merely table stakes.

To truly dominate, you must go deeper by nesting entities. This means programmatically connecting the listing to all related entities: the neighborhood it’s in, the school district that serves it, nearby places of interest like parks and restaurants, and the RealEstateAgent representing it. (Of these, only RealEstateAgent is itself a schema.org type; neighborhoods, school districts, and places of interest are usually modeled with general types such as Place.) This transforms a simple listing page into a rich, interconnected data hub that AI can easily understand and leverage.
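As a rough illustration of nesting, the Python sketch below builds a JSON-LD document for one listing. The types and properties used (RealEstateListing, SingleFamilyResidence, PostalAddress, Offer, RealEstateAgent, Place, AdministrativeArea, mainEntity, containedInPlace, offers, seller) all exist in schema.org, but the way they are combined here is one possible modeling choice, not an official recipe. In particular, representing a school district as an AdministrativeArea is an assumption, and the input dictionary and its keys are hypothetical.

```python
import json


def build_listing_jsonld(listing: dict) -> dict:
    """Build a nested JSON-LD document for a single listing page."""
    return {
        "@context": "https://schema.org",
        "@type": "RealEstateListing",
        "url": listing["url"],
        # The home itself, nested inside the listing page.
        "mainEntity": {
            "@type": "SingleFamilyResidence",
            "address": {
                "@type": "PostalAddress",
                "streetAddress": listing["street"],
                "addressLocality": listing["city"],
            },
            "numberOfBedrooms": listing["bedrooms"],
            # Assumption: neighborhood and school district are modeled
            # as places that contain the home.
            "containedInPlace": [
                {"@type": "Place", "name": listing["neighborhood"]},
                {"@type": "AdministrativeArea", "name": listing["school_district"]},
            ],
        },
        # The asking price and the agent representing the property.
        "offers": {
            "@type": "Offer",
            "price": listing["price"],
            "priceCurrency": "USD",
            "seller": {"@type": "RealEstateAgent", "name": listing["agent_name"]},
        },
    }


# Made-up sample record.
sample = {
    "url": "https://example.com/listings/123-main-street",
    "street": "123 Main Street",
    "city": "Springfield",
    "bedrooms": 4,
    "neighborhood": "Elmwood Heights",
    "school_district": "Elmwood School District",
    "price": 500000,
    "agent_name": "Jane Doe Realty",
}

doc = build_listing_jsonld(sample)
print(json.dumps(doc, indent=2))
```

The printed JSON would typically be embedded in the listing page inside a script tag of type application/ld+json.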

Step 2: Creating a Proprietary Knowledge Graph for Your Brokerage

This is where you build an unassailable competitive advantage. A proprietary knowledge graph is a unique data asset created by enriching the standardized MLS data with your own exclusive information. This could include:

- High-production neighborhood video tours.

- Agent-written insights on local amenities and lifestyle.

- Hyper-local market trend data and analysis.

- Floor plans, virtual tours, and other unique assets.

By weaving this proprietary data into your structured schema, you create something the portals cannot easily replicate. You are no longer just another outlet for MLS data; you are the definitive source of comprehensive local real-estate intelligence. This is the essence of mastering first-party data in a cookieless world.
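One way to picture “weaving” proprietary content into structured data is sketched below in Python. It attaches videos and agent-written pieces to an existing JSON-LD document through the schema.org subjectOf property, using the VideoObject and Article types. The function name, the input shapes, and the sample content are hypothetical, and other modeling choices would be equally valid.

```python
import copy


def enrich_listing(base: dict, videos: list, insights: list) -> dict:
    """Return a copy of `base` with proprietary content linked via subjectOf."""
    enriched = copy.deepcopy(base)  # leave the MLS-derived document untouched
    related = [
        {"@type": "VideoObject", "name": video["title"], "contentUrl": video["url"]}
        for video in videos
    ]
    related += [
        {
            "@type": "Article",
            "headline": insight["headline"],
            "author": {"@type": "Person", "name": insight["agent"]},
        }
        for insight in insights
    ]
    if related:
        enriched["subjectOf"] = related
    return enriched


# Made-up inputs: a bare listing document plus brokerage-owned content.
base_doc = {
    "@context": "https://schema.org",
    "@type": "RealEstateListing",
    "url": "https://example.com/listings/123-main-street",
}
videos = [{"title": "Elmwood Heights Neighborhood Tour",
           "url": "https://example.com/video/elmwood-tour.mp4"}]
insights = [{"headline": "Living Near Elmwood Park", "agent": "Jane Doe"}]

enriched_doc = enrich_listing(base_doc, videos, insights)
print(len(enriched_doc["subjectOf"]))  # 2
```

Keeping the MLS-derived document and the proprietary layer separate until this merge step makes it easier to refresh the feed without losing the brokerage’s own content.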

Step 3: The Multi-Site Advantage: Scaling Authority Across Your Brand

For larger brokerages with dozens or hundreds of agents, this data-first infrastructure can be scaled to create a powerful network effect. At One Click SEO, we’ve implemented this for major real estate brands, creating a centralized data hub that feeds a network of parent and agent sites.

Each site reinforces the other. The parent brand’s authority flows to the agent sites, and the unique, hyper-local content and data from agent sites flow back to strengthen the parent brand. It creates a powerful, interconnected digital ecosystem that boosts the authority of the entire brand and each individual agent simultaneously.

Case Study: From Invisible to Inescapable in AI Search

Theories are one thing; results are another. Let’s look at a real-world example.

The Challenge

A multi-office brokerage with over 200 agents had a modern-looking website, but it was built on a generic IDX platform. Their bounce rates were high, and they had zero visibility in search results for competitive, high-intent terms like “luxury waterfront homes.” They were completely absent from any AI-generated search answers.

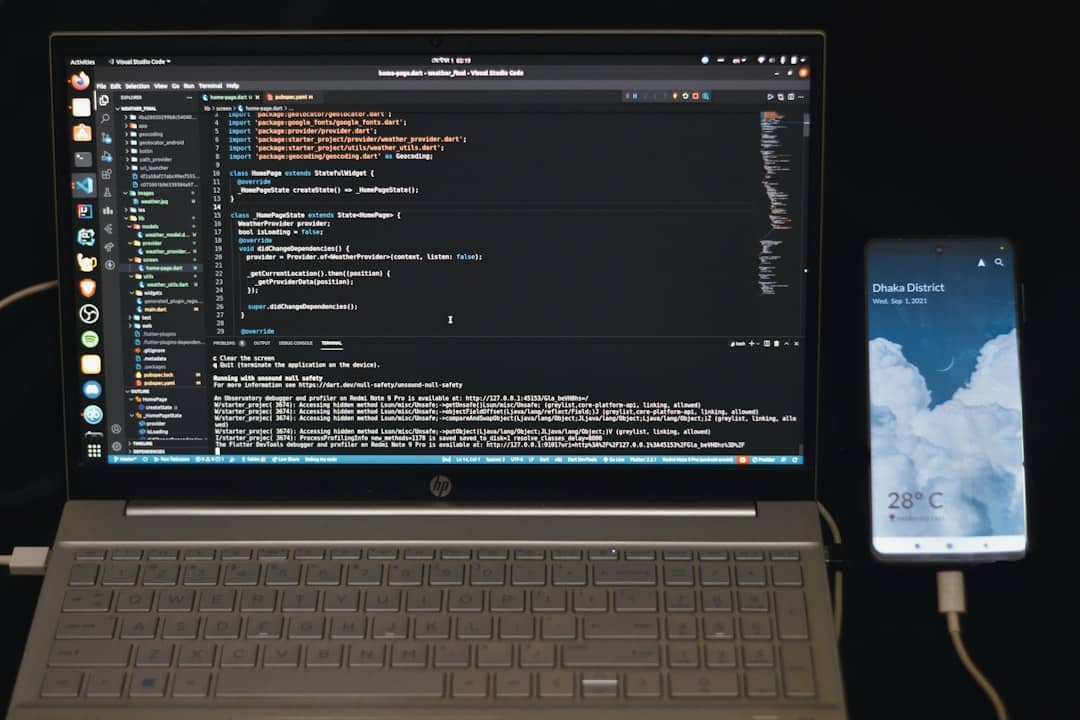

The Solution: Implementing a Schema-Driven, AI-First Platform

We re-architected their entire digital presence from the data up. This involved:

- Processing their raw MLS feed to standardize and structure every data point according to RESO standards.

- Implementing a custom, deeply-nested schema strategy that connected every listing to its neighborhood, agent, office, and a wealth of proprietary local content.

- Building a system that automatically generated unique, data-rich community and subdivision pages, turning them into topical authorities.
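The last step, data-rich community and subdivision pages, can be pictured with a small aggregation sketch in Python. This is not the system described in the case study, only an illustration of the kind of per-subdivision figures such a page might be generated from. SubdivisionName and ListPrice are RESO Data Dictionary field names; the function, the output keys, and the sample records are assumptions.

```python
from collections import defaultdict
from statistics import median


def summarize_by_subdivision(listings: list) -> dict:
    """Group listings by subdivision and compute simple page-level stats."""
    prices_by_subdivision = defaultdict(list)
    for record in listings:
        prices_by_subdivision[record["SubdivisionName"]].append(record["ListPrice"])
    return {
        name: {
            "active_listings": len(prices),
            "median_list_price": median(prices),
            "lowest_list_price": min(prices),
        }
        for name, prices in prices_by_subdivision.items()
    }


# Made-up standardized records.
records = [
    {"SubdivisionName": "Elmwood Heights", "ListPrice": 500000},
    {"SubdivisionName": "Elmwood Heights", "ListPrice": 700000},
    {"SubdivisionName": "Elmwood Heights", "ListPrice": 650000},
    {"SubdivisionName": "Harbor Point", "ListPrice": 900000},
    {"SubdivisionName": "Harbor Point", "ListPrice": 1100000},
]

summary = summarize_by_subdivision(records)
print(summary["Elmwood Heights"]["median_list_price"])  # 650000
```

Because the inputs are standardized, the same function works for every subdivision in the feed, which is what makes generating such pages automatically feasible.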

The Results: Measurable Business Growth

The impact was transformative and tracked across key business metrics:

- Traditional SEO: Within six months, they saw a 300% increase in organic traffic for non-branded, high-intent keywords.

- AI Search: They began to be consistently featured as the primary source in AI-generated answers for local real estate queries, often with images and links pointing directly to their listing pages.

- Business ROI: This surge in qualified visibility led to a 45% increase in qualified online leads, directly attributable to the new digital infrastructure.

The Future is Written in Code: Is Your Real Estate Business Ready?

The future of real estate marketing isn’t about out-blogging the competition; it’s about out-structuring them. Your MLS data is your most valuable, underutilized digital asset. Unlocking it is the key to not just surviving but thriving in the age of AI search.

While these principles are critical in the hyper-competitive real estate vertical, they are universally applicable. We’ve implemented the same data-first, schema-driven strategies to help clients in complex fields like healthcare and contractor services dominate their local markets. The core truth remains: the business that provides the cleanest, most authoritative data to search engines wins.

This brings us to the final, critical question you must ask yourself: Is your website built for the search engine of yesterday, or are you ready to become the authoritative source for the AI of tomorrow?

Stop competing on keywords and start dominating with data.

If you’re ready to move beyond a standard IDX website and build a true digital asset that wins in both traditional and AI search, schedule your AI-Readiness & Digital Infrastructure Assessment today. Let’s discuss the source code of your success.